May 14, 2026 • 16 min read

AI governance stats for 2026: Adoption, risk, and the oversight gap defining the year

Optro staff

In the past 12 months, 40% of organizations have reported inaccurate AI outputs, and 22% faced legal claims tied to AI use — all while their governance programs remain in the process of formalization (Optro, The AI oversight gap).

AI governance programs are still taking shape at most organizations, but AI-related incidents are not waiting for them to finish. Data breaches, regulatory actions, and legal claims have already materialized across industries. The stakes have shifted from theoretical to material, and 2026 is the year the gap between AI deployment and AI oversight becomes a board-level liability.

Below is the complete breakdown of the latest AI governance stats and what they mean for GRC, audit, and risk leaders.

Top AI governance stats for 2026 (at a glance)

The figures below are the highest-signal data points from the broader analysis that GRC leaders need to know in 2026. They are collected here for quick reference and revisited in context further down.

- 85% of organizations have integrated AI into core operations or deployed it across multiple functions, but only 25% report comprehensive visibility into employee AI use (Optro, The AI oversight gap).

- Spending on AI governance platforms is expected to reach $492 million in 2026, according to Gartner.

- 76% of surveyed organizations now have a Chief AI Officer, up from just 26% in 2025 (IBM).

- Close to three-quarters of companies plan to deploy agentic AI within two years, but only 21% report a mature model for agent governance (Deloitte).

- 78% of enterprises are unprepared for EU AI Act obligations (Vision Compliance).

AI adoption and visibility stats

The AI deployment picture is largely settled. AI is embedded across functions, vendors, and the daily decisions of individual employees, often without centralized oversight. The result is what we call the exposure gap: the distance between where AI is operating and where governance can see it.

- 41% of organizations say AI is central to long-term business planning, and 34% report enterprise-wide alignment on AI strategy (Optro, The AI oversight gap).

- 35% of organizations describe shadow AI as pervasive or widespread, with another 45% characterizing it as moderate in prevalence (Optro, The AI oversight gap).

- 42% of companies believe their strategy is highly prepared for AI adoption, yet feel less prepared in terms of infrastructure, data, risk, and talent (Deloitte).

- 58% of companies report at least limited use of physical AI today, a figure expected to reach 80% within two years (Deloitte).

Takeaway: The Deloitte figures stand out. Deployment intent is outrunning readiness on several fronts at once: organizations that rate their strategy as highly prepared still feel less prepared on infrastructure, data, risk, and talent. That gap is where the incident data picks up.

AI risk and incident stats

Across organizations with active AI deployments, inaccurate outputs, data breaches, regulatory actions, and legal claims are materializing – often within the same organizations and in the same 12-month window.

- 34% of organizations cite inputting sensitive data into AI systems as the top employee risk concern, and 21% say insufficient training accounts for the majority of risky behavior, not malicious intent (Optro, The AI oversight gap).

- 61% of respondents report a year-over-year increase in AI-enabled social engineering, and 42% say they have already experienced a successful incident (Optro, The AI oversight gap).

- 74% of respondents identify inaccuracy and 72% cite cybersecurity as highly relevant AI risks (McKinsey).

- Nearly 60% of organizations that experienced an AI incident rate their response as satisfactory or negative, signaling a confidence gap in incident-response readiness (McKinsey).

- 36% of executives report strengthening risk management in direct response to AI-driven disruption and uncertainty (PwC).

Takeaway: Employee behavior has become the most active and least governed risk surface, and external attackers exploit the same visibility gaps.
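One way to act on the sensitive-data concern cited above is a check that runs before a prompt leaves the employee's workflow. The following is a minimal sketch, not a production control: the pattern list, the function names `find_sensitive_data` and `check_prompt`, and the block-or-allow behavior are all assumptions made for illustration, and a real deployment would rely on the organization's own data classification rules.

```python
import re

# Hypothetical patterns for illustration only; real rules would come from
# the organization's data classification policy.
SENSITIVE_PATTERNS = {
    "email address": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "US SSN-style number": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def find_sensitive_data(prompt: str) -> list[str]:
    """Return the label of every sensitive pattern found in the prompt."""
    return [
        label
        for label, pattern in SENSITIVE_PATTERNS.items()
        if pattern.search(prompt)
    ]


def check_prompt(prompt: str) -> str:
    """Block the prompt if any sensitive pattern matches, otherwise allow it."""
    findings = find_sensitive_data(prompt)
    if findings:
        return "Blocked: prompt appears to contain " + ", ".join(findings)
    return "Allowed"


print(check_prompt("Summarize the Q3 audit plan"))
print(check_prompt("Draft a reply to jane.doe@example.com about SSN 123-45-6789"))
```

Returning a reason along with the decision matters here: if insufficient training drives most risky behavior, the message shown at the point of use doubles as the training.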

Ownership, accountability, and governance maturity stats

The incident data above points to a structural problem. Policy cannot fix what architecture has broken. When AI governance responsibility is too widely distributed, no single function has the authority to act.

- No single function owns more than a quarter of AI governance responsibility — IT (25%), risk management (18%), cross-functional arrangements (17%), and dedicated AI governance teams (10%) all share fragmented control (Optro, The AI oversight gap).

- 58% of leaders believe their governance controls are keeping pace with AI adoption, but only 18% have active mitigation covering most or all identified risks, and only 19% can identify cross-functional risks in real time (Optro, The AI oversight gap).

- 64% of surveyed CEOs say they are comfortable making major strategic decisions based on AI-generated input (IBM).

Takeaway: When no single function owns more than a quarter of governance responsibility, accountability doesn't get shared; it gets lost. Meanwhile, major strategic decisions are being made on AI-generated input inside organizations where fewer than one in five can identify cross-functional risks in real time.
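The arithmetic behind the ownership and confidence figures above can be laid out in a few lines. This is a rough illustration using only the percentages cited in this section. The variable names are illustrative, and treating everything outside the four named owners as a single unattributed remainder is an assumption, since the article does not list the other survey categories.

```python
# Ownership shares as cited above (Optro, The AI oversight gap).
ownership_shares = {
    "IT": 25,
    "Risk management": 18,
    "Cross-functional arrangements": 17,
    "Dedicated AI governance teams": 10,
}

named_total = sum(ownership_shares.values())
# Assumption: the remainder sits with owners not listed in this article.
unattributed = 100 - named_total
largest_owner, largest_share = max(ownership_shares.items(), key=lambda kv: kv[1])

# Confidence versus coverage, in percentage points.
keeping_pace = 58         # leaders who believe controls are keeping pace
active_mitigation = 18    # active mitigation covering most or all identified risks
realtime_visibility = 19  # can identify cross-functional risks in real time

mitigation_gap = keeping_pace - active_mitigation
visibility_gap = keeping_pace - realtime_visibility

print(f"Named owners cover {named_total}%; remainder {unattributed}%")
print(f"Largest single owner: {largest_owner} at {largest_share}%")
print(f"Confidence exceeds mitigation coverage by {mitigation_gap} points")
print(f"Confidence exceeds real-time visibility by {visibility_gap} points")
```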

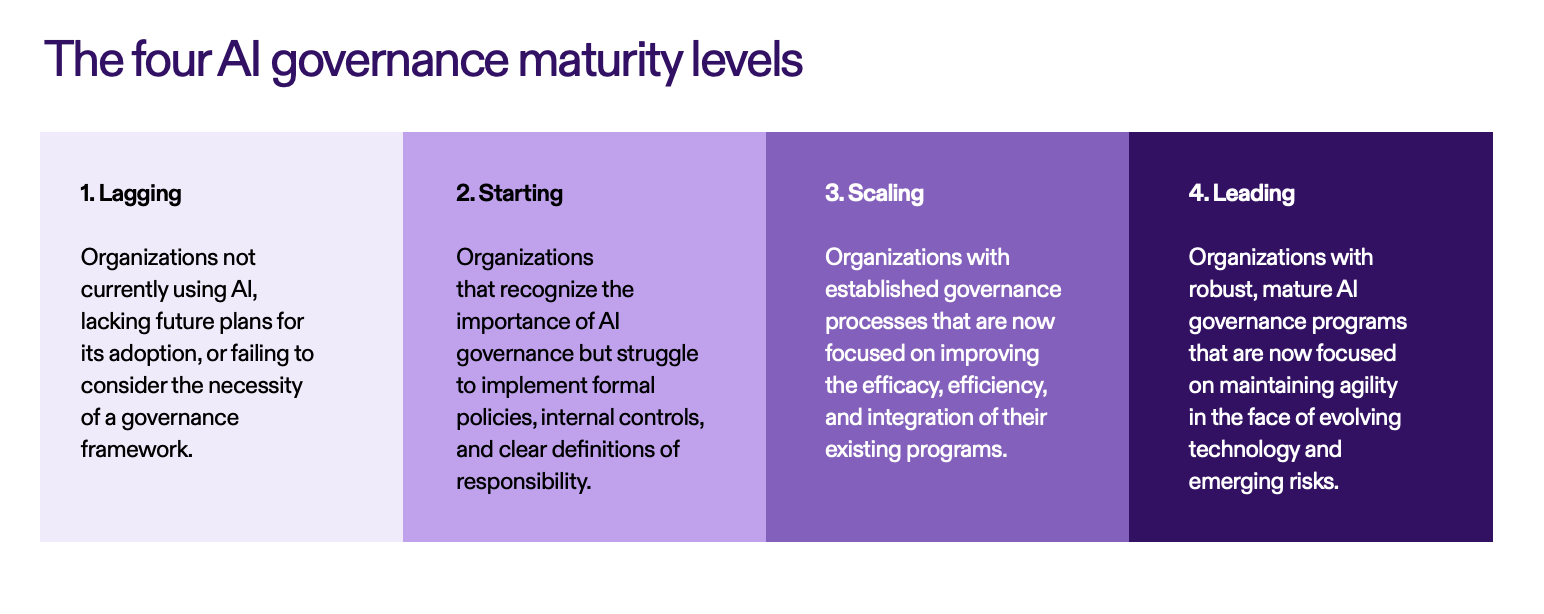

AI governance maturity by level

The data shows that AI governance maturity is advancing unevenly. Only 34% of organizations report their programs are strategic and continuously improving (Optro, The AI oversight gap).

Source: Optro, The AI oversight gap.

Governance components in place

Roughly half of organizations have the high-level documentation done, but the components most directly linked to risk detection remain in the minority.

| Component | Share of organizations with this in place |
|---|---|
| AI usage policies and data governance | 50% |
| Employee AI training | 48% |
| Model inventory | 34% |
| Bias and fairness testing | 21% |
| AI red teaming | 17% |

Source: Optro, The AI oversight gap.

The cost and ROI of AI governance in 2026

Money, time, and ROI are the metrics that move budgets. The data shows governance maturity is now a direct predictor of financial return. The dividing line between organizations that will see returns and those that won’t has less to do with how much they spend and more to do with whether governance has been operationalized as a system of action.

- 72% of organizations expect GRC technology budgets to increase, with AI governance solutions (43%), regulatory compliance tools (41%), and GRC platform upgrades (38%) ranking as the top three investment priorities (Optro, The AI oversight gap).

- Organizations that deployed AI governance platforms are 3.4 times more likely to achieve high effectiveness in AI governance than those that did not (Gartner).

- Only 12% of CEOs say AI has delivered both cost and revenue benefits, while 56% report no significant financial benefit to date (PwC).

- CEOs whose organizations have established strong AI foundations are three times more likely to report meaningful financial returns (PwC).

- 38% of executives report increasing AI investment to capture new opportunities (PwC).

Takeaway: Budgets are not the constraint. The dividing line between AI leaders and laggards is whether governance has been operationalized as a system of action or left as a documentation exercise. Strong foundations — responsible AI frameworks, automated policy enforcement, unified risk visibility — correlate directly with ROI.

Industry-specific AI governance signals

Regulatory exposure and AI deployment patterns vary significantly by sector. These stats capture where the pressure is most acute.

1. Financial services and insurance

Financial services represented 17% of respondents in the Optro study, and insurance another 8% (Optro, The AI oversight gap). The sector faces compounded pressure from rising AI-enabled fraud and a fragmented multi-jurisdictional regulatory landscape. With 42% of organizations having already experienced a successful social engineering incident (cited earlier), banks and insurers are prioritizing AI-enabled social engineering ahead of ransomware in 2026.

2. European enterprises (cross-sector)

EU enforcement is reshaping the readiness conversation across every regulated industry operating in the bloc. In fact, 74% of 50 European AI companies analyzed are classified as high-risk under the EU AI Act, yet 96% have no public position on compliance (Sprinkling Act).

3. Manufacturing, logistics, and industrials

Industrial and manufacturing made up 10% of respondents in the Optro study, with transportation and logistics adding another 2% (Optro, The AI oversight gap). These sectors lead the surge in physical AI deployment, where governance must extend beyond software to hardware, edge devices, and human-in-the-loop controls.

4. Technology and professional services

Technology was the largest industry segment in the Optro research at 18% of respondents, with business and professional services at 6% (Optro, The AI oversight gap). These sectors are also where agentic AI is being procured first — and where the governance gap between deployment intent and oversight maturity is most exposed.

Future forecasts: AI governance predictions for 2027

Looking ahead, three forecasts will define the next 12 months of AI governance work.

- The agentic AI governance gap will widen before it closes. With close to 75% of companies planning to deploy agentic AI within two years but only 21% reporting mature agent governance (Deloitte), most organizations will be governing systems that act without a human prompt using controls designed for supervised tools.

- EU AI Act enforcement will trigger a wave of remediation spending. With 78% of enterprises unprepared for their obligations (Vision Compliance), the back half of 2026 and into 2027 will see compressed timelines for control mapping, evidence generation, and regulatory disclosure.

- The CAIO role will mature from appointment to accountability. The jump from 26% to 76% CAIO adoption in a single year (IBM) means 2027 will be the year boards begin holding these leaders accountable for measurable governance outcomes, not just program existence.

Conclusion: How to respond to these AI governance trends

Across every section of this analysis, the data points to the same structural condition: AI deployment has outpaced AI governance at nearly every level of the enterprise. The exposure gap is real, the human layer is the most active risk surface, and ownership remains too distributed to act decisively. The organizations that emerge with competitive and regulatory advantage will not be the ones that govern most cautiously — they will be the ones that govern structurally.

Three actions GRC, audit, and risk leaders should take now:

- Move policy enforcement into workflows. Acceptable-use controls and training should live where employees make decisions, not in documents they review once a year.

- Consolidate fragmented governance technology. A patchwork of point solutions cannot deliver the real-time, cross-functional visibility needed to govern AI at the speed it now operates.

- Build for agentic AI now. Inventory the agentic AI footprint, define human oversight thresholds, and redesign controls that currently assume a human initiator — before deployment scales.
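To make the third action more concrete, an agent inventory with human oversight thresholds could be sketched as a small data structure. Everything here is hypothetical: the `Impact` tiers, the `AgentRecord` fields, the example agents, and the rule that actions at or above the threshold need sign-off are assumptions chosen for illustration, not a description of any particular platform.

```python
from dataclasses import dataclass
from enum import IntEnum


class Impact(IntEnum):
    """Hypothetical impact tiers for an agent action."""
    LOW = 1
    MEDIUM = 2
    HIGH = 3


@dataclass(frozen=True)
class AgentRecord:
    """One entry in a hypothetical agentic AI inventory."""
    name: str
    owner: str                  # accountable function
    approval_threshold: Impact  # actions at or above this tier need human sign-off


def requires_human_approval(agent: AgentRecord, action_impact: Impact) -> bool:
    """Return True when the action meets or exceeds the agent's threshold."""
    return action_impact >= agent.approval_threshold


# Illustrative inventory entries; names and owners are made up.
inventory = [
    AgentRecord("invoice-matcher", owner="Finance", approval_threshold=Impact.HIGH),
    AgentRecord("vendor-onboarding", owner="Procurement", approval_threshold=Impact.MEDIUM),
]

for agent in inventory:
    needs_approval = requires_human_approval(agent, Impact.MEDIUM)
    print(f"{agent.name} ({agent.owner}): medium-impact action needs approval = {needs_approval}")
```

Recording the threshold on the inventory entry, rather than inside each agent, keeps the oversight rule visible to the function accountable for it and lets it change without redeploying the agent.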

Ready to close the AI oversight gap? Explore Optro's AI governance solution — purpose-built for GRC teams operationalizing AI controls within a connected risk platform.

Frequently asked questions

What is the most common AI governance challenge in 2026?

Visibility. Only 25% of organizations have comprehensive visibility into how employees use AI, and 35% describe shadow AI as pervasive or widespread (Optro, The AI oversight gap). The exposure gap between AI deployment and AI oversight is the single largest source of downstream incidents.

How much are organizations spending on AI governance?

Spending on dedicated AI governance platforms is projected to reach $492 million in 2026, according to Gartner. Separately, 72% of organizations expect their broader GRC technology budgets to increase, with AI governance solutions ranking as the top investment priority (Optro, The AI oversight gap).

How many companies are ready for the EU AI Act?

Not enough. According to Vision Compliance, 78% of enterprises are unprepared for their obligations under the EU AI Act. In a separate analysis of 50 European AI companies, 74% are classified as high-risk under the Act, yet 96% have no public position on compliance (Sprinkling Act).

What is agentic AI, and why does it matter for governance?

Agentic AI describes systems in which multiple agents operate and coordinate work autonomously, executing multi-step tasks without a human in the decision loop for every step. It matters because close to three-quarters of companies plan to deploy agentic AI within two years, but only 21% have a mature governance model for it (Deloitte). Controls built for supervised AI tools will not govern systems that act without a prompt.

About the authors

Optro is the leading AI-powered GRC platform, transforming the way the world’s biggest companies manage risk. More than 50% of the Fortune 500 trust Optro to elevate their audit, risk, and compliance management.

You may also like to read

Best AI governance software in 2026

AI risk management: Frameworks, threats, and controls

How to unify your data and AI governance policies

